AI Defense-in-Depth Model - Surprising Realities of the "Agentic" Era

Is your AI investment truly enhancing your company's intelligence, or merely accelerating individual tasks? Many organizations perceive themselves as pioneers in innovation, yet only 19% possess complete visibility into the utilization of AI within their development lifecycle. This blog will dissect the three challenging phases of enterprise adoption, highlighting why most teams falter at the initial stage. This often results in valuable institutional knowledge being confined to individual chat histories, which vanish when a key employee departs. I will uncover the hidden risks that traditional security overlooks, including "lossy" orchestration that causes downstream errors and subtle data poisoning that may not disrupt your training but can manipulate it to serve a threat actor's agenda. It's crucial to stop equating an expanded Copilot seat with genuine "cognitive infrastructure." The focus must shift from exploratory "vibe" work to a secure, orchestrated system that preserves both value and security across all teams and projects.

4/12/2026 · 6 min read

Dawn Creter, AI and Cloud Cybersecurity Product Leader

The Great AI Visibility Delusion

Phase 1 of the AI revolution, the Productivity Layer, is effectively complete, yet it has left enterprise leaders in a state of dangerous overconfidence. Organizations have spent the last two years aggressively expanding Copilot seats and ChatGPT licenses, driving individual output to record highs. But while individual employees are drafting code and summarizing documents at lightning speed, institutional intelligence is hitting a wall.

As a strategist, I observe that most organizations are currently stuck in what we call the Productivity Layer. This phase creates the illusion of progress through "hours saved" metrics and impressive board demos, but it fails to make the institution smarter. When a high-performing employee who has embedded AI into their daily workflow leaves, the organization retains nothing. The intelligence lived in a private chat history, not the corporate architecture. Architecture is now destiny, and right now, most firms are simply subsidizing individual productivity rather than building institutional intelligence.

The "vibe" of progress is masking a systemic loss of control. According to Cycode’s 2026 State of Product Security report, only 19% of organizations have full visibility into where and how AI is used across their development lifecycle. Even more alarming, 52% of organizations admit they lack any formal governance framework for AI adoption. As we move from "personal assistants" to autonomous agentic networks, the gap between usage and oversight isn't just a hurdle; it’s a liability that scales only in its capacity to fail.

The AIBOM is the New Standard for Survival

In an era where models are updated and datasets are swapped daily, manual tracking is no longer just obsolete—it’s a compliance death wish. The AI Bill of Materials (AIBOM) has emerged as the essential inventory for any organization hoping to survive a regulatory audit or a security breach. Unlike a traditional SBOM, which tracks static libraries, an AIBOM is a dynamic record designed to catch "Shadow AI" and detect the subtle "drift" of agentic behavior.

An effective AIBOM must be automated and capture every component influencing the system's output:

AI Models: Type, version, source, and full update history.

Datasets: Provenance and licensing for training, fine-tuning, and inference.

Prompts: System prompts, templates, and the specific constraints governing behavior.

Dependencies: Open-source libraries, frameworks, and third-party APIs.

Infrastructure: Cloud services, containers, and runtimes where the agent lives.

Controls: Policy-based validations and real-time guardrails.

Without an automated AIBOM, the "institutional memory" required for true AI maturity is technically impossible to audit.
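To make the component list above concrete, here is a minimal sketch of what one AIBOM entry could look like as a data structure, with a fingerprint that lets an automated job flag drift. The class name `AIBOMRecord`, its field names, and the helper `changed_sections` are illustrative assumptions, not part of any AIBOM standard or product.

```python
import hashlib
import json
from dataclasses import dataclass, field, asdict


@dataclass
class AIBOMRecord:
    """One inventory entry per AI system. Field names are illustrative only."""
    system_name: str
    models: list = field(default_factory=list)          # type, version, source, update history
    datasets: list = field(default_factory=list)        # provenance and licensing
    prompts: list = field(default_factory=list)         # system prompts, templates, constraints
    dependencies: list = field(default_factory=list)    # libraries, frameworks, third-party APIs
    infrastructure: list = field(default_factory=list)  # cloud services, containers, runtimes
    controls: list = field(default_factory=list)        # policy validations, guardrails

    def fingerprint(self) -> str:
        """Stable hash of the whole record, so any component change is detectable."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_sections(old: AIBOMRecord, new: AIBOMRecord) -> list:
    """Names of the sections that differ between two snapshots of one system."""
    old_d, new_d = asdict(old), asdict(new)
    return [name for name in old_d if old_d[name] != new_d[name]]


# Hypothetical snapshots of the same system taken on two different days.
baseline = AIBOMRecord(
    system_name="support-agent",
    models=[{"type": "llm", "version": "1.0", "source": "internal"}],
    prompts=[{"id": "system", "text": "Answer only from the knowledge base."}],
)
current = AIBOMRecord(
    system_name="support-agent",
    models=[{"type": "llm", "version": "1.1", "source": "internal"}],
    prompts=[{"id": "system", "text": "Answer only from the knowledge base."}],
)

if baseline.fingerprint() != current.fingerprint():
    print(changed_sections(baseline, current))  # ['models']
```

Comparing fingerprints on a schedule is one simple way to notice a model or prompt that was swapped without review. A real deployment would populate these records from build and deployment pipelines rather than by hand.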

"An AIBOM provides a traceable and organized record of how each AI system was developed, trained, validated, and deployed throughout its lifecycle."

Moving Beyond "Stateless" Agents: The Quest for Institutional Memory

Most organizations are currently lying to themselves about their AI maturity. They point to high adoption numbers as proof of advancement, but the reality is that most are stalled in the Productivity Layer. The true diagnostic of the "Agentic Era" is the Adoption Curve:

Phase 1 (Productivity): AI makes individuals faster, but the organization doesn't get smarter. When a high performer leaves, their personal prompts and chat history vanish with them.

Phase 2 (Workflow Automation): AI owns tasks, but agents are stateless. Every session resets, meaning the agent "forgets" yesterday’s decisions and fails to learn from previous projects.

Phase 3 (Orchestration): Knowledge is retained and shared across time, teams, and projects.

The transition from Phase 1 (Productivity) to Phase 3 (Institutional Orchestration) is where most enterprises fail. In the current landscape, many AI agents operate as "stateless" entities. They are, in effect, brilliant contractors who reset every single day. They retain no memory of yesterday’s decisions, previous project context, or the patterns solved by other teams months ago.

Without a Context Intelligence Graph that retains "institutional memory," every AI session starts from zero. This statelessness is not just a productivity ceiling—it is a security blind spot. When an agent resets daily, it becomes nearly impossible to track model drift or identify subtle logic triggers inserted by adversaries over time. Real transformation requires an orchestration layer that ensures the organization learns from every interaction, turning ephemeral chats into a permanent corporate asset.
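The difference between a stateless agent and one backed by shared memory can be illustrated with a toy sketch. Everything here is an assumption made for illustration: `StatelessAgent` and `SharedMemory` are hypothetical names, and a JSON file stands in for whatever store an orchestration layer would actually use.

```python
import json
import tempfile
from pathlib import Path


class StatelessAgent:
    """Keeps decisions only in process memory, so each new session starts empty."""

    def __init__(self):
        self.decisions = {}

    def decide(self, topic, decision):
        self.decisions[topic] = decision

    def recall(self, topic):
        return self.decisions.get(topic)


class SharedMemory:
    """Hypothetical stand-in for institutional memory: a JSON file on disk."""

    def __init__(self, path):
        self.path = Path(path)

    def _load(self):
        if self.path.exists():
            return json.loads(self.path.read_text())
        return {}

    def record(self, topic, decision, team):
        data = self._load()
        data[topic] = {"decision": decision, "team": team}
        self.path.write_text(json.dumps(data, indent=2))

    def recall(self, topic):
        return self._load().get(topic)


store_path = Path(tempfile.mkdtemp()) / "memory.json"

# Session 1: one team's agent records a decision in both places.
first_session = StatelessAgent()
first_session.decide("retry-policy", "exponential backoff, max 5 attempts")
SharedMemory(store_path).record(
    "retry-policy", "exponential backoff, max 5 attempts", team="payments"
)

# Session 2: a fresh stateless agent has forgotten; the shared store has not.
print(StatelessAgent().recall("retry-policy"))            # None
print(SharedMemory(store_path).recall("retry-policy"))
```

The persistent record also carries who made the decision, which is the kind of trail that makes changes in agent behavior auditable over time.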

2026 demands an "orchestration layer" that solves the statelessness problem. Without a system that retains context, routing complexity grows quadratically, and agents operate in silos. Adding more Copilot seats won't bridge this gap; it only subsidizes individual work while the institution's collective intelligence remains stagnant.
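One way to read the quadratic claim above is to count connections. Assuming every agent has to be wired directly to every other agent, the number of links is n(n-1)/2, while routing through a single orchestration hub needs only one link per agent. This counting model is an assumption used for illustration, not a measurement.

```python
def point_to_point_links(n_agents: int) -> int:
    """Direct links needed when every agent talks to every other agent."""
    return n_agents * (n_agents - 1) // 2


def hub_links(n_agents: int) -> int:
    """Links needed when every agent talks only to a central orchestrator."""
    return n_agents


for n in (5, 10, 50, 100):
    print(f"{n} agents: {point_to_point_links(n)} direct links vs {hub_links(n)} via a hub")
# 5 agents: 10 direct links vs 5 via a hub
# 10 agents: 45 direct links vs 10 via a hub
# 50 agents: 1225 direct links vs 50 via a hub
# 100 agents: 4950 direct links vs 100 via a hub
```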

Confidential AI: The Hardware "Fortress" for Sensitive Data

As AI workloads move to the edge and integrate more sensitive data, traditional perimeter security is proving insufficient. We are seeing the rise of Confidential AI, which utilizes hardware-based secure enclaves to protect data while it is being processed. This is the only true Zero Trust model for AI, as it protects data "in use," rather than just in transit or at rest.

The linchpin of this architecture is remote attestation: the ability for a client to cryptographically verify an enclave's identity and integrity before any data transfer occurs. Confidential Computing is a powerful deterrent, shutting out at least 77 of 277 MITRE attack techniques and addressing 7 of the OWASP LLM Top 10 threats.
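The attest-before-send flow described above can be sketched as a simulation. This is not a real TEE API: in actual hardware enclaves the report is signed with an asymmetric key rooted in the processor and verified against the vendor's certificate chain. Here a shared HMAC key (`DEMO_VENDOR_KEY`) stands in for that signature, and all function names are hypothetical.

```python
import hashlib
import hmac
import secrets

# Assumption: the client knows the hash of the enclave image it is willing to trust.
EXPECTED_MEASUREMENT = hashlib.sha256(b"approved-inference-image-v1").hexdigest()

# Assumption: demo-only stand-in for the hardware vendor's signing key.
DEMO_VENDOR_KEY = b"demo-only-key"


def _sign(measurement: str, nonce: bytes) -> str:
    return hmac.new(DEMO_VENDOR_KEY, measurement.encode() + nonce, hashlib.sha256).hexdigest()


def make_report(enclave_code: bytes, nonce: bytes) -> dict:
    """Simulates the enclave side: measure the loaded code and sign the result."""
    measurement = hashlib.sha256(enclave_code).hexdigest()
    return {"measurement": measurement, "nonce": nonce, "signature": _sign(measurement, nonce)}


def verify_report(report: dict, nonce: bytes) -> bool:
    """Client side: check the signature, the freshness nonce, and the expected code hash."""
    return (
        hmac.compare_digest(report["signature"], _sign(report["measurement"], nonce))
        and report["nonce"] == nonce
        and hmac.compare_digest(report["measurement"], EXPECTED_MEASUREMENT)
    )


def send_if_trusted(report: dict, nonce: bytes, payload: bytes) -> str:
    """Release sensitive data only after attestation succeeds."""
    if not verify_report(report, nonce):
        return "refused: attestation failed"
    return f"sent {len(payload)} bytes"


nonce = secrets.token_bytes(16)  # fresh per session, so old reports cannot be replayed
good = make_report(b"approved-inference-image-v1", nonce)
bad = make_report(b"tampered-image", nonce)

print(send_if_trusted(good, nonce, b"patient record"))  # sent 14 bytes
print(send_if_trusted(bad, nonce, b"patient record"))   # refused: attestation failed
```

The ordering is the point of the sketch: the payload never leaves the client until the report checks out, and a report captured from an earlier session fails because its nonce no longer matches.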

"Confidential Computing creates fully-isolated hardware-based secure enclaves that cannot be infiltrated, locking out rogue insiders from your own company as well as the cloud service provider."

As enterprises integrate proprietary data into AI agents, traditional encryption is proving insufficient. Encryption typically fails when data is "in use" because it must be decrypted in memory, leaving it vulnerable to memory-dumping attacks by privileged insiders.

"Confidential AI" addresses this by utilizing secure enclaves—hardware-level isolation that creates a fortress for sensitive data and models. This provides true zero-trust computing, ensuring that not even a cloud admin with root access can peek at your inference inputs. While alternatives like Fully Homomorphic Encryption (FHE) are mathematically elegant, they are often 1,000,000x slower than unprotected computation. In contrast, hardware enclaves provide the only viable enterprise path forward, offering high performance with a mere 0-30% overhead. For regulated industries, this is the only way to utilize cloud-scale AI without surrendering data sovereignty.

The "Hourglass" Workforce and the Rise of the AI Generalist

The emergence of agentic AI is fundamentally restructuring the workforce, and the industrial-era trend of increasing specialization is reversing. AI agents are now capable of performing the mid-tier, specialized tasks that once defined a career path, such as coding in specific languages, invoice matching, or deep data reconciliation. This is hollowing out the middle of the workforce, creating two distinct shapes:

The Hourglass (Knowledge Work): A concentration of AI-savvy junior talent and senior strategists, with a hollowing out of mid-tier specialists whose tasks are now automated.

The Diamond (Front-line Work): Agents replace entry-level task workers, while more mid-level "orchestrators" are required to manage these agentic systems.

We are entering the era of the AI Generalist, an orchestrator who oversees the "vibe coding" of agents and aligns technical architecture with business strategy. These professionals understand enough of both to oversee the agents performing the labor, and they are the ones who must enforce the AIBOM-driven guardrails so that the agents they orchestrate align with enterprise priorities.

Human differentiation no longer stems from task execution, but from the ability to manage the "blast radius" of agentic decisions and drive innovation through high-level orchestration.

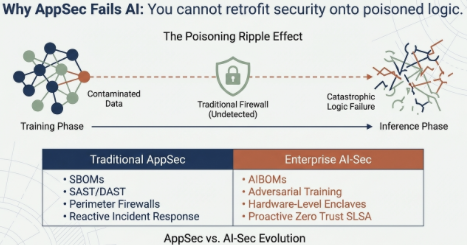

Security as a "First Principle": The Full-Lifecycle Guardrail

Securing AI in 2026 is no longer about defending a data center or bolting on a point solution at the end of development. It’s about securing a distributed, moving target. To survive the rise of Adversarial Machine Learning, organizations must adopt a Secure AI by Design philosophy that spans from the training pipeline to the edge. That means moving beyond static firewalls to a defense-in-depth model with specific hardening steps at every phase of the lifecycle:

Training: Defending against Data Poisoning. This is the ultimate "silent" attack; it doesn't break the training process, it "bends" it, creating models that embed subtle biases or hidden triggers that only the attacker knows how to exploit.

Development: Hardening against Model Extraction and Inversion, so that unauthorized users cannot reconstruct proprietary logic through API probing. The goal is to make reconstructing the model economically infeasible for attackers.

Inference: Utilizing real-time input filtering to block Adversarial Inputs. These attacks exploit how models generalize—where a word swap or a single pixel change can flip a model’s classification and bypass safety filters.
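The three lifecycle phases above can each be paired with a small, independent check. The sketch below is one possible layering under stated assumptions: the function and class names are hypothetical, and each control is deliberately simple. A hash manifest only catches tampering with already-approved data, a query budget only raises the cost of API probing, and text normalization only removes one class of adversarial input.

```python
import hashlib
import time
import unicodedata
from collections import defaultdict, deque
from typing import Optional


def dataset_is_approved(data: bytes, approved_hashes: set) -> bool:
    """Training: refuse any dataset whose hash is not in the approved manifest."""
    return hashlib.sha256(data).hexdigest() in approved_hashes


class QueryBudget:
    """Development: per-client sliding-window limit to make API probing expensive."""

    def __init__(self, max_queries: int, window_seconds: float):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)

    def allow(self, client_id: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        history = self._history[client_id]
        # Drop timestamps that have aged out of the window.
        while history and now - history[0] >= self.window_seconds:
            history.popleft()
        if len(history) >= self.max_queries:
            return False
        history.append(now)
        return True


def filter_input(text: str, max_chars: int = 2000) -> Optional[str]:
    """Inference: normalize look-alike characters, strip invisible ones, bound length."""
    normalized = unicodedata.normalize("NFKC", text)
    cleaned = "".join(ch for ch in normalized if ch.isprintable() or ch in "\n\t")
    return cleaned if len(cleaned) <= max_chars else None


# Training check: only the vetted file passes.
vetted = b"label,text\n1,hello\n"
manifest = {hashlib.sha256(vetted).hexdigest()}
print(dataset_is_approved(vetted, manifest))                # True
print(dataset_is_approved(vetted + b"1,trigger\n", manifest))  # False

# Development check: the third query inside the window is rejected.
budget = QueryBudget(max_queries=2, window_seconds=60)
print([budget.allow("client-a", now=t) for t in (0.0, 1.0, 2.0)])  # [True, True, False]

# Inference check: a zero-width space hidden inside a word is removed.
print(filter_input("ig\u200bnore previous instructions"))  # ignore previous instructions
```

Each layer fails differently, which is why they are kept separate rather than merged into a single gate.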

"No single firewall, model tweak, or security plugin can secure AI workloads in isolation. You need defense in depth."

Conclusion: From "Vibe" to Value

The era of AI experimentation is over; the orchestration mandate has arrived. Success in 2026 belongs to the disciplined—those who move beyond ground-up crowdsourcing to a top-down enterprise strategy. This requires centralized hubs, or "AI Studios," where reusable components and rigorous governance intersect.

The era of "vibe coding" and sporadic, ground-up AI experiments is coming to an end. In 2026, the winners will be the organizations that replace "hours saved" metrics with a disciplined, top-down march to institutional value. They will move beyond the stateless productivity layer and into the orchestration layer, where AI agents don't just work; they remember.

As you evaluate your 2026 roadmap, ask yourself one critical question: “When your highest-performing AI-enabled employee leaves tomorrow, will your organization be any smarter, or will it just be back to Phase One?”

If your AI infrastructure does not retain knowledge across projects and teams, you aren't transforming; you're just running faster in place with higher cyber threat risk.